Transforming video monetization

Role

Lead designer

Duration

Q1 2023 - Q4 2024

Company

JW Player

Primary program

Figma

OVERVIEW

Dynamic Strategy Rules

JW Player's Dynamic Strategy Rules transformed video monetization for digital publishers. I led the design of this solution, which streamlined how publishers manage video experiences across their sites.

The product cut implementation time from weeks to hours while boosting ad revenue by up to 59%. More importantly, it gave publishers unprecedented control over their video experiences without requiring technical expertise.

BACKGROUND

Where it started

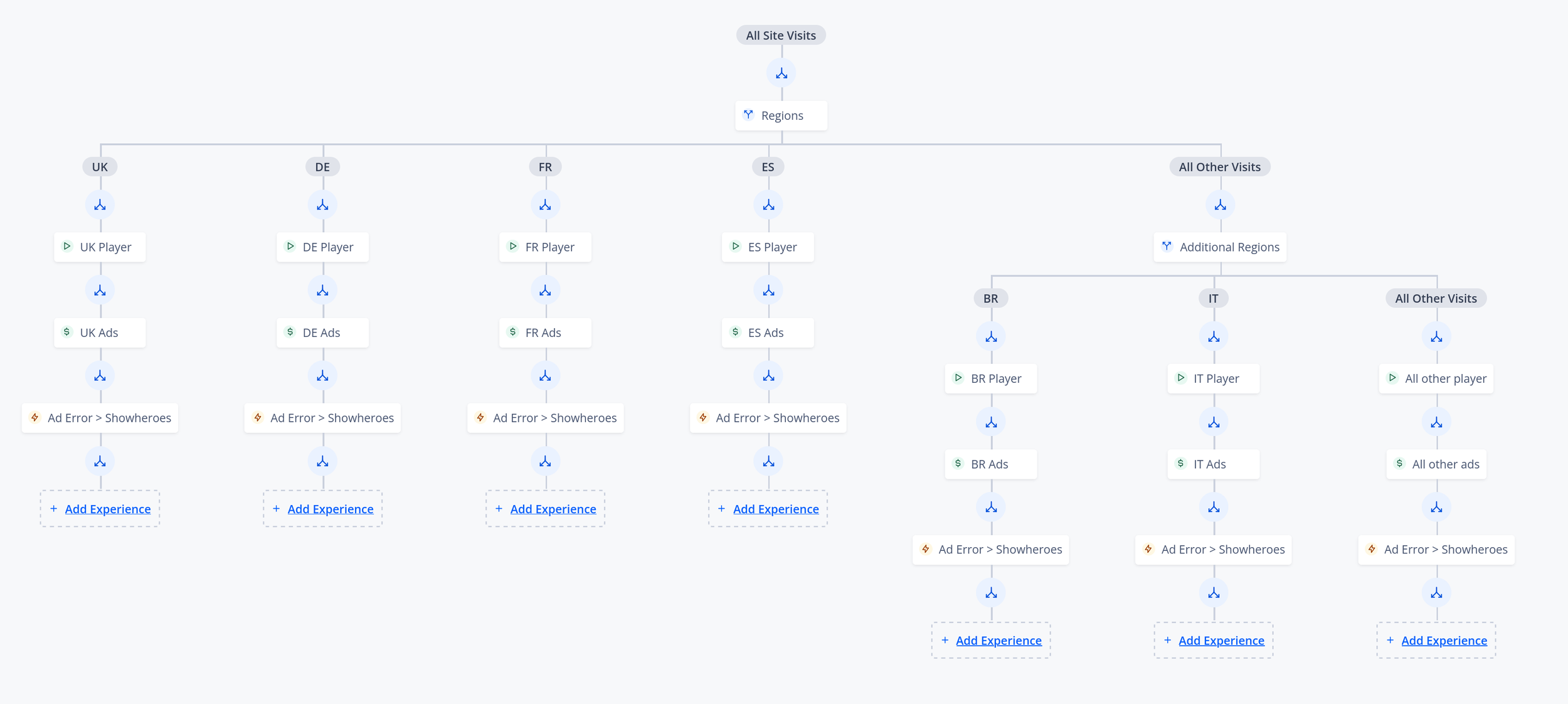

In Q3 2022, our engineering team began building the foundation of what would become Dynamic Strategy Rules. The initial vision, directed by our VP of Product, centered around creating a visual decision tree interface that would revolutionize how publishers implement video and monetization strategies.

I joined the project in Q1 2023, tasked with visualizing and refining this vision. The challenge? Transform a complex backend system into an intuitive interface that would allow publishers to implement sophisticated video strategies without heavy development resources.

Product Vision: A visual decision-tree interface

RESEARCH-DRIVEN FOUNDATION

Understanding our customer

User personas and their journeys

I interviewed customers and internal stakeholders to learn about the product users and the common problems publishers face. After identifying key challenges, I focused on the users of these tools, creating personas based on their daily tasks. I then revisited some interviewees to validate these personas and understand their work better. Are these the actual users of the tool? Where do their workflows connect, if at all?

RESEARCH-DRIVEN FOUNDATION

Customer challenges

Through extensive research and customer interviews, we identified three critical problems that publishers faced when managing their video strategies:

Complex Requirements Management

Publishers were struggling to balance competing needs: user experience, advertising requirements, and third-party integrations.

Resource-Heavy Coordination

Every change, even minor, demanded extensive coordination among engineering, ad ops, product, and editorial teams, making the process tiresome and lengthy.

Limited Flexibility

Existing tools were inadequate. Teams struggled to optimize ad placement and respond promptly to performance insights, hindering their ability to maximize revenue.

THE PROCESS - PHASE 1

Phased Implementation

This project was done in stages, and we improved features step by step based on user feedback and changing business requirements. The first stage focused on identifying the main features users needed to create and implement their video strategies.

Defining core concepts

With help from the product team, I began identifying what customers need to create a successful strategy. There were a few key elements for the user to define, starting with the essential components:

Placements

“Real estate” on a publisher’s site where the appropriate strategy would be executed.

Strategy

Strategies enable you to deploy customized content and advertising experiences based on the viewer’s context. A Strategy is made up of a combination of Conditions and Experiences.

Conditions

Split branches by percentages, geography, device, and other user visit data.

Content Experience

Includes player settings, playlists, and some advanced features.

Advertising Experience

Configure your video advertising settings, set up Ad Breaks, and enable Player Bidding here.
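
To make these concepts concrete, here is a minimal sketch of how a Strategy could be modeled as a decision tree: Conditions are branch nodes, and Experiences are the leaves a viewer ends up with. Every name in it (`Experience`, `Condition`, `resolve`, the `device` and `geo` fields) is a hypothetical stand-in for illustration, not JW Player's actual data model or API.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Optional, Union

# Hypothetical model for illustration only; not JW Player's real schema.


@dataclass
class Experience:
    """Leaf node: the content and advertising settings a viewer receives."""
    name: str
    playlist: Optional[str] = None
    ad_breaks: list[str] = field(default_factory=list)


@dataclass
class Condition:
    """Branch node: routes a visit down one of two subtrees."""
    label: str
    test: Callable[[dict], bool]
    if_true: Node
    if_false: Node


Node = Union[Condition, Experience]


def resolve(node: Node, visit: dict) -> Experience:
    """Walk the tree from the root until an Experience is reached."""
    while isinstance(node, Condition):
        node = node.if_true if node.test(visit) else node.if_false
    return node


mobile = Experience("mobile", playlist="short-clips", ad_breaks=["preroll"])
desktop_us = Experience("desktop-us", playlist="featured", ad_breaks=["preroll", "midroll"])
default = Experience("default", playlist="featured")

# One Strategy: branch on device first, then on geography.
strategy = Condition(
    label="Device is mobile",
    test=lambda v: v.get("device") == "mobile",
    if_true=mobile,
    if_false=Condition(
        label="Geography is US",
        test=lambda v: v.get("geo") == "US",
        if_true=desktop_us,
        if_false=default,
    ),
)
```

Calling `resolve(strategy, {"device": "desktop", "geo": "US"})` would return the `desktop_us` experience. A percentage split could be expressed the same way, assuming each visit carried some bucket value to test against.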

In this phase, we also set the rules for how nodes could be combined. Some items couldn't be added to a strategy canvas unless specific nodes were already present. For instance, a user couldn't add an Advertising Experience to a branch that didn't already have a Content Experience.

Journey map diagram with appropriate rules
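
The prerequisite rule described above can be sketched as a simple lookup. This is only an illustration under assumed names: the node kinds, the `PREREQUISITES` table, and both functions are hypothetical, and the product's real rule set may have been broader.

```python
from __future__ import annotations

# Hypothetical prerequisite table: a node kind maps to the kinds that must
# already exist in the branch before it can be added.
PREREQUISITES: dict[str, set[str]] = {
    "advertising_experience": {"content_experience"},
}


def can_add(node_kind: str, branch: list[str]) -> bool:
    """Return True if every prerequisite of node_kind is already in the branch."""
    required = PREREQUISITES.get(node_kind, set())
    return required.issubset(branch)


def add_node(node_kind: str, branch: list[str]) -> list[str]:
    """Return a new branch with node_kind appended, or raise if a rule is violated."""
    if not can_add(node_kind, branch):
        missing = sorted(PREREQUISITES[node_kind] - set(branch))
        raise ValueError(f"Add {', '.join(missing)} before {node_kind}")
    return branch + [node_kind]
```

In an interface, a check like `can_add` could drive whether the "add" control for a node type is enabled, so users see the constraint before they hit it.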

THE PROCESS - PHASE 2

Beta Phase

With key ideas in place and initial explorations underway, I could make some choices. Some features would resemble parts of the dashboard, so I focused on the tree's appearance, how experiences and conditions connect, and how to integrate them into the tree. I began to envision how the tree would look and work.

Preliminary sketches of adding elements to tree canvas

Early tree UI explorations

Design system update! While I was working on the designs, the company acquired a payments platform, so we needed to merge design systems. This meant redesigning parts of Dynamic Strategies. I updated the designs to fit the new system, ensuring consistency across the JW Player platform.

Updated designs used for user testing

User testing

User testing highlighted areas for improvement

With the design changes implemented, I wanted to validate them, since this was a new product for the company. User testing was essential to confirm that the tool met user needs.

What was I looking for? I needed validation and feedback on terminology and on how well users understood the concepts. I needed to see whether the flow made sense, whether people understood what they were building, and whether the tool fit the work they were doing. I also needed to understand whether this new tool would accelerate their work.

This testing provided some very useful insights. It highlighted some glaring issues that needed to be addressed:

The tool was a bit complicated to use. People of all technical backgrounds would be using it, and the design needed to account for that.

Users did not understand the core elements of the strategy.

Confusion around settings.

UI was hard to understand. The users had a hard time tracking where they were in the tree.

THE PROCESS - PHASE 3

Alpha phase

We started with an Alpha phase instead of a full launch, choosing a key customer partner to test the tool and provide feedback. This helped us collect useful data on its performance and find areas to improve. During this phase, we added major upgrades, such as automation triggers and better traffic management. We kept in close touch with our early users, setting up regular feedback sessions to help us prioritize improvements and discover new optimization opportunities. This ongoing communication ensured we were building what our users truly wanted.

We made gradual UI improvements to the decision tree. User testing feedback showed that users wanted to see a summary of the node content. I also believed that changing the branch labels would help clarify their meanings.

⬆️ Before (Beta phase)

⬆️ Tree UI improved

⬆️ Content Experience page

⬆️ Advertising Experience page

THE PROCESS - PHASE 4

Improvements

More improvements to the tree.

I ran a workshop between design and engineering to come up with ways to simplify creating a Strategy.

Workshop was a success

The solution from the workshop was a Reusable Component Library concept. To simplify the concepts further, the Advertising and Content Experience configurations were merged to become the “JWP Video Experience”.

Advertising configuration page

Media Curation configuration page

JWP Video Experience

IMPACT & RESULTS

Results don’t lie

Within the first three months of implementation, customers saw

59% lift in revenue

Average ad impressions per embed saw a

129% increase

The majority of publishers saw a

10% increase in video views

Reduced codebase by 10,000+ lines

Simplified deployment to a single-line implementation

4-hour implementation

Implementation time for new video strategies dropped from an average of 2-3 weeks to 4 hours.

LOOKING BACK

Key learnings

Start with the Right Problem

Early in the project, I noticed we were focusing too heavily on the interface challenges when the real problem was workflow bottlenecks. By stepping back and reframing our approach around team coordination, we created a more impactful solution. This taught me to always validate that we're solving the core problem, not just its symptoms.

Test at Different Scales

Through our phased rollout, we discovered that what worked for major publishers often overwhelmed smaller teams. This insight led us to develop scalable solutions that could grow with our users. Now I always ensure testing includes diverse user scales and scenarios.

Balance Power with Simplicity

Our biggest challenge was making complex functionality accessible without oversimplifying. Through multiple iterations, we found that progressive disclosure (showing basic options first while keeping advanced features readily available) was key to satisfying both novice and power users.

LOOKING FORWARD

Future enhancements

The success of Dynamic Strategy Rules opened up several exciting opportunities for future enhancement. A few features that I would have liked to explore and add to the tool are:

Workflow Intelligence

Future iterations could incorporate learning capabilities that adapt to each team's unique workflow patterns. This could include personalized shortcuts, automated routine tasks, and predictive suggestions based on past actions.

Enhanced Analytics

The next evolution of our tool could provide deeper insights into strategy performance, helping publishers understand not just what's working, but why. By connecting more data points, we could help publishers make more informed decisions about their video experiences.

Smarter Automation

We're exploring ways to leverage usage data to suggest optimal strategy configurations based on publisher goals and audience behavior. Imagine the system automatically recommending adjustments based on performance patterns across similar publishers.